What Is Ceph Object Storage?

Ceph is an open-source distributed storage platform whose RADOS Gateway (RGW) exposes an S3-compatible object storage API. OurClone can connect to any Ceph cluster reachable through RGW for sync, transfer, encrypted backup, and mount workflows.

Official website: https://ceph.io/

- Open source, and can be self-hosted or managed by a third party, so limits depend on the deployment.

- Connects through the RADOS Gateway S3-compatible endpoint with an Access Key and Secret Key.

- Useful as an on-premise destination for OurClone encrypted backup repositories.

What OurClone Supports for Ceph Object Storage

| Operation | How it works in OurClone |
|---|---|
| Sync | Keep a folder on Ceph Object Storage synchronized with another cloud storage account or a local folder. OurClone compares file changes and updates only what needs to change. |
| Transfer | Copy or move files between Ceph Object Storage, your Mac, and other connected cloud providers. Transfers run through your local computer, so you stay in control of the data path. |
| Backup | Create an encrypted backup repository on Ceph Object Storage, then save snapshots of local folders. Later snapshots are incremental, so unchanged files do not need to be uploaded again. |
| Mount | Mount Ceph Object Storage as a local directory in macOS or Windows, then browse and manage remote files from the operating system file manager. |

Ceph Object Storage Upload and Download Limits

These limits come from the storage provider or protocol, not from OurClone. OurClone still has to respect provider file-size caps, API quotas, bandwidth rules, account storage quota, and server-side throttling.

| Item | Details |
|---|---|
| Upload limits | Ceph RGW supports S3-style multipart uploads, with part sizes typically between 5 MiB and 5 GiB and up to 10,000 parts per object. Final per-object and bucket caps are set by the cluster operator and are not fixed by the protocol. |
| Download limits | Downloads from Ceph RGW are bound by cluster bandwidth, gateway rate limits, request authorization, and any quota the operator applies to the user or bucket, rather than a small per-download cap. |
| OurClone tip | Ask the Ceph cluster administrator about per-user quotas, RGW rate limits, and recommended part sizes before scheduling large OurClone jobs. |
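To make the multipart figures in the table above concrete, here is a minimal sketch of how a part size could be chosen for a given object size. It assumes the typical bounds quoted above (5 MiB to 5 GiB per part, 10,000 parts); a Ceph operator may configure different values, and the function names and the 64 MiB default are illustrative only, not OurClone settings.

```python
MIB = 1024 ** 2
GIB = 1024 ** 3

# Typical S3-style bounds quoted in the table; assumptions, not fixed
# Ceph values. Ask the cluster operator what the gateway enforces.
MIN_PART = 5 * MIB
MAX_PART = 5 * GIB
MAX_PARTS = 10_000


def choose_part_size(object_size: int, preferred: int = 64 * MIB) -> int:
    """Return a part size in bytes that keeps the upload within MAX_PARTS."""
    if object_size <= 0:
        raise ValueError("object_size must be positive")
    # Smallest part size that still fits the object into MAX_PARTS parts
    # (integer ceiling division).
    needed = (object_size + MAX_PARTS - 1) // MAX_PARTS
    part = max(preferred, needed, MIN_PART)
    if part > MAX_PART:
        raise ValueError("object is too large for one multipart upload")
    return part


def part_count(object_size: int, part_size: int) -> int:
    """Number of parts needed to upload object_size bytes."""
    return (object_size + part_size - 1) // part_size


if __name__ == "__main__":
    size = 200 * GIB
    part = choose_part_size(size)
    print(part // MIB, "MiB parts,", part_count(size, part), "parts")
```

Under these assumed bounds the largest single object is 10,000 parts of 5 GiB each, which is why very large files need a larger part size than the default.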

How to Add Ceph Object Storage in OurClone

OurClone connects to Ceph Object Storage with S3-compatible access keys.

- Ask your Ceph administrator (or use `radosgw-admin user create`) for an Access Key ID, Secret Access Key, and the RGW endpoint URL.

- Confirm the bucket name and, if your gateway uses one, the region or zone identifier.

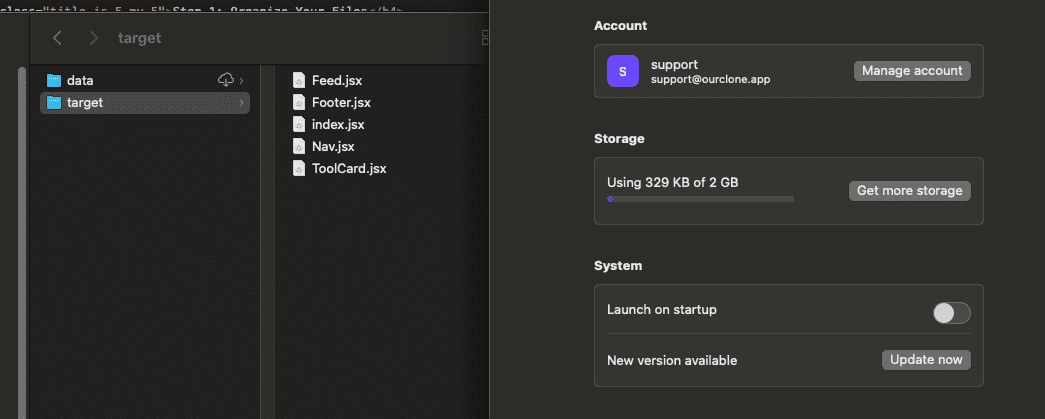

- Open OurClone, click Add Storage, and select Ceph Object Storage (or the S3 Compatible option).

- Enter the endpoint URL, Access Key ID, Secret Access Key, region (if required), and bucket, then connect.
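If you create the user yourself, `radosgw-admin user create` prints the user record as JSON. The sketch below shows one way to pull out the values the connection form asks for. It assumes the record contains a `keys` list with `access_key` and `secret_key` fields, which is the usual layout but worth checking against your Ceph version. The sample record, the `ourclone` uid, the endpoint, and the bucket name are placeholders.

```python
import json
from urllib.parse import urlparse

# Illustrative output of:
#   radosgw-admin user create --uid=ourclone --display-name="OurClone"
# All values are placeholders, not real credentials.
SAMPLE_OUTPUT = """
{
  "user_id": "ourclone",
  "display_name": "OurClone",
  "keys": [
    {"user": "ourclone",
     "access_key": "EXAMPLEACCESSKEY",
     "secret_key": "exampleSecretKey"}
  ]
}
"""


def connection_settings(admin_json: str, endpoint: str, bucket: str,
                        region: str = "") -> dict:
    """Collect the fields needed to connect to the gateway."""
    record = json.loads(admin_json)
    if not record.get("keys"):
        raise ValueError("user record has no S3 keys")
    key = record["keys"][0]
    parsed = urlparse(endpoint)
    if parsed.scheme not in ("http", "https") or not parsed.hostname:
        raise ValueError("endpoint must be a full http(s) URL")
    return {
        "endpoint": endpoint,
        "access_key_id": key["access_key"],
        "secret_access_key": key["secret_key"],
        "region": region,  # leave empty if the gateway does not use one
        "bucket": bucket,
    }


if __name__ == "__main__":
    settings = connection_settings(
        SAMPLE_OUTPUT, "https://rgw.example.internal", "ourclone-backups"
    )
    # Print everything except the secret.
    print({k: v for k, v in settings.items() if k != "secret_access_key"})
```

Treat the secret key like a password: avoid pasting the full JSON record into tickets or chat.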

Sync and Transfer Workflow

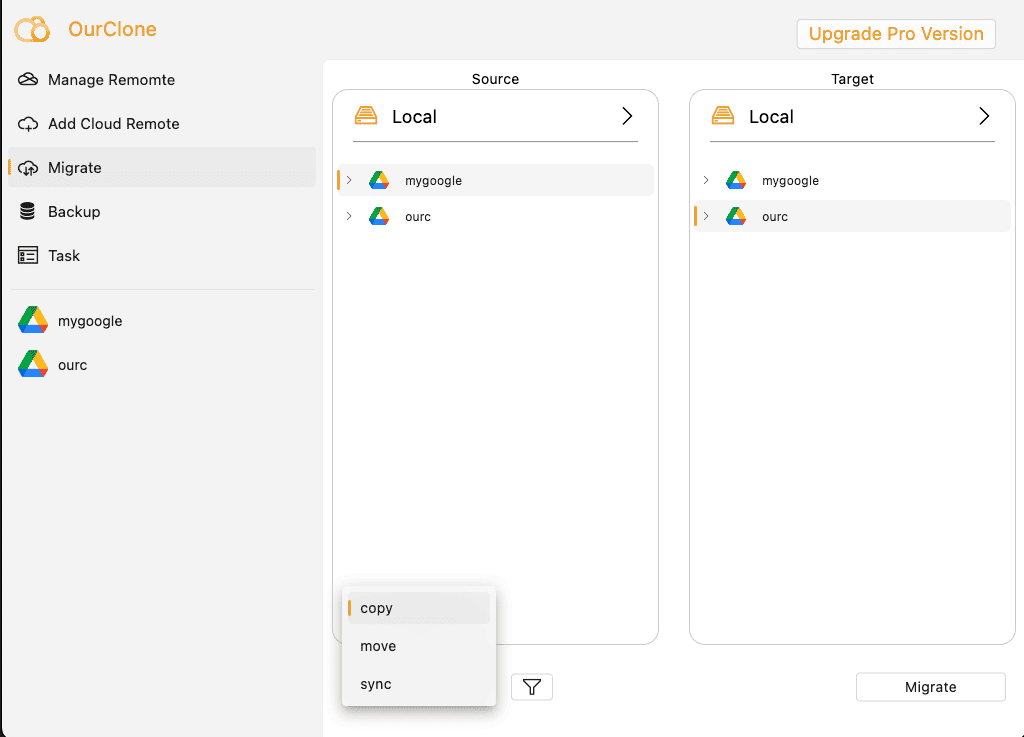

After Ceph Object Storage is connected, open the Migrate area in OurClone. Choose Ceph Object Storage as the source or destination, select the folders you want to work with, then choose the task mode.

- Copy duplicates files while keeping the source unchanged.

- Move transfers files and removes them from the source after completion.

- Sync keeps the destination aligned with the source folder.

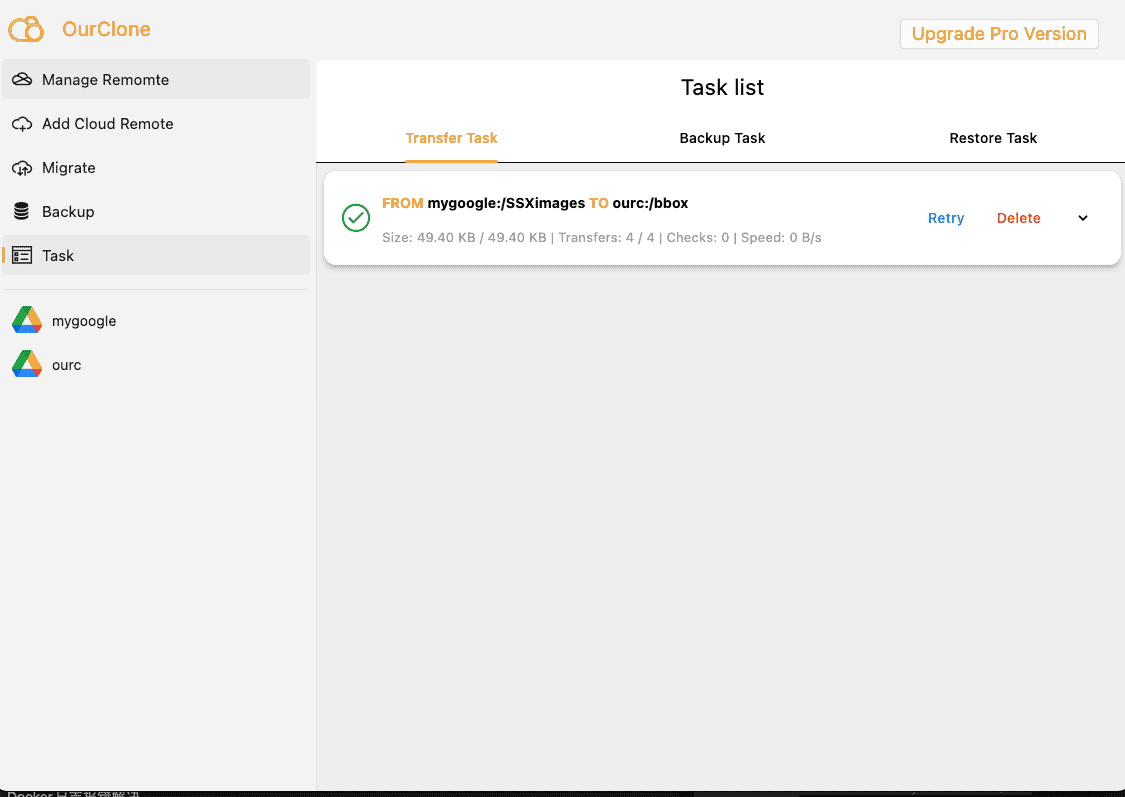

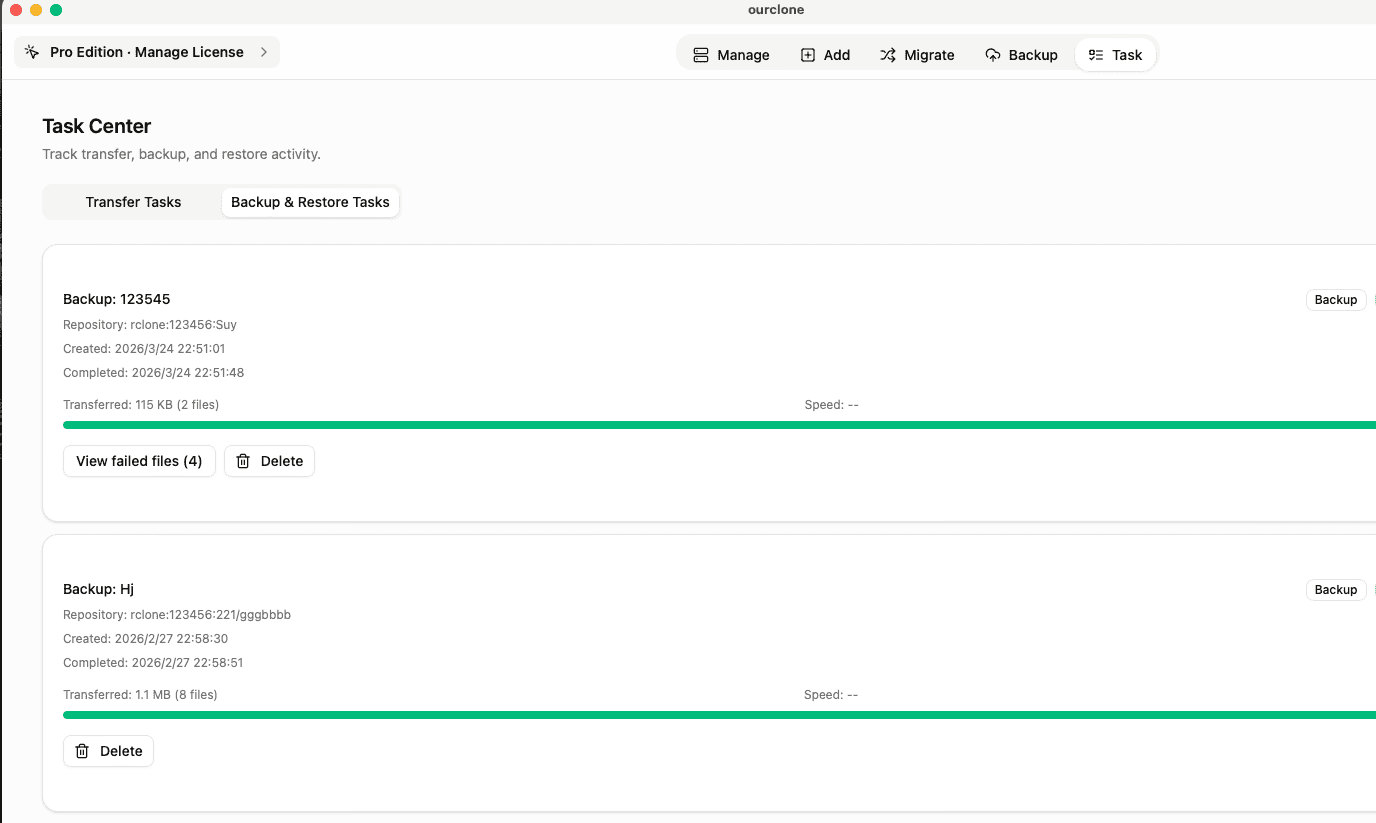

Task progress is visible in the Task tab, including completed, skipped, and failed files.
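The difference between the Copy, Move, and Sync modes above can be sketched as a small planning function. This is an illustration of the general idea, not OurClone's implementation: comparing files by size and modification time is an assumption, and because this guide does not say whether Sync removes files that exist only at the destination, the sketch only lists them.

```python
from dataclasses import dataclass, field


@dataclass
class Plan:
    upload: list = field(default_factory=list)         # new or changed at source
    skip: list = field(default_factory=list)           # identical on both sides
    delete_source: list = field(default_factory=list)  # Move only
    extra_at_dest: list = field(default_factory=list)  # Sync only, reported


def plan_task(mode: str, source: dict, dest: dict) -> Plan:
    """Plan a task; source and dest map relative path -> (size, mtime)."""
    if mode not in ("copy", "move", "sync"):
        raise ValueError(f"unknown mode: {mode}")
    plan = Plan()
    for path in sorted(source):
        if dest.get(path) == source[path]:
            plan.skip.append(path)      # unchanged, nothing to transfer
        else:
            plan.upload.append(path)    # missing or different at destination
    if mode == "move":
        # Source files are removed only after the transfer completes.
        plan.delete_source = sorted(source)
    if mode == "sync":
        plan.extra_at_dest = sorted(set(dest) - set(source))
    return plan


if __name__ == "__main__":
    src = {"a.txt": (10, 100), "b.txt": (20, 200)}
    dst = {"a.txt": (10, 100), "old.txt": (5, 50)}
    print(plan_task("sync", src, dst))
```

The `upload` and `skip` lists correspond loosely to the completed and skipped counts shown in the Task tab.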

Backup Workflow

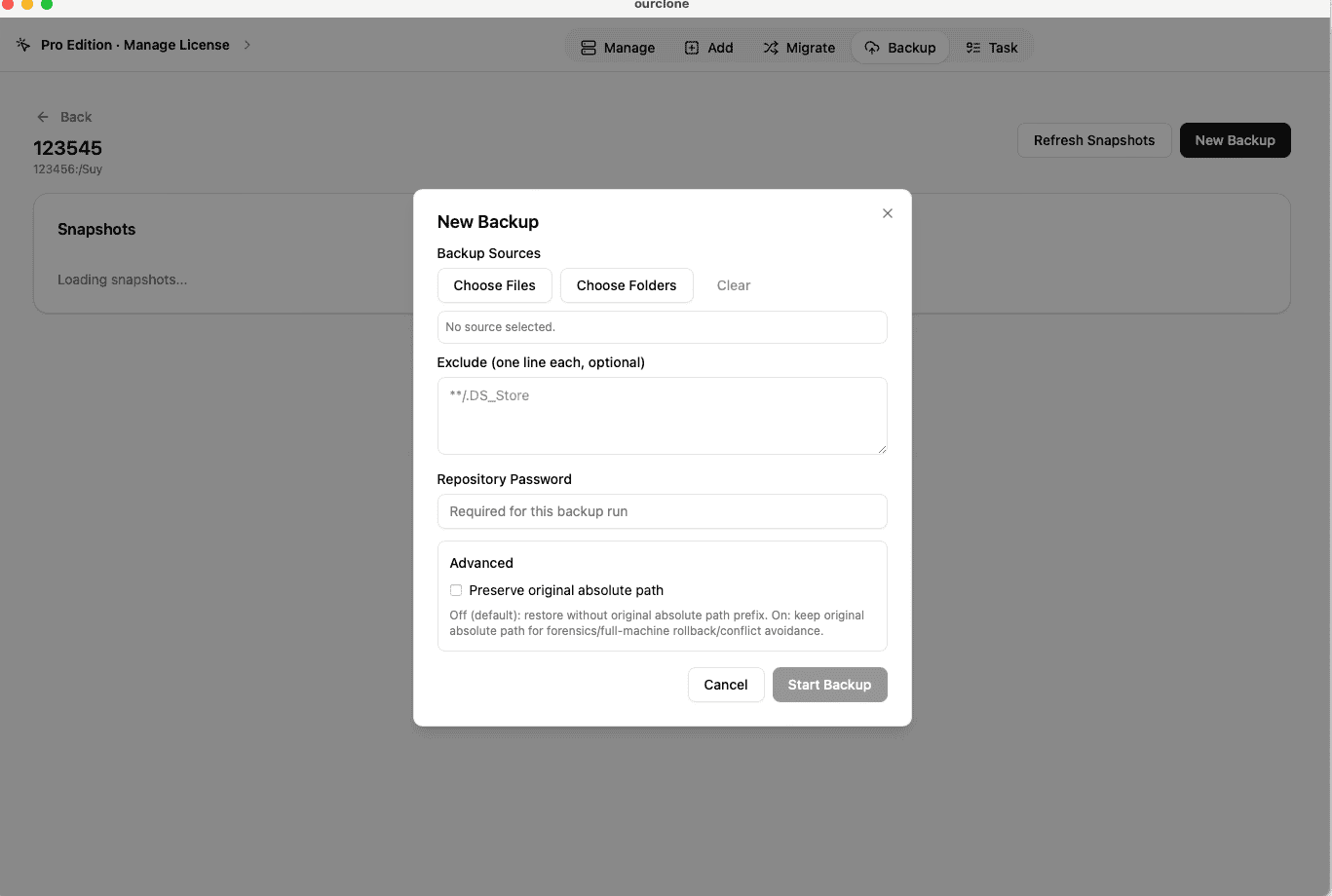

For backups, first create a backup repository on Ceph Object Storage. A repository needs a name, a storage path, and an encryption password. Keep this password safe because it is required for both future snapshots and restores.

- Open Backup and create or choose a repository on Ceph Object Storage.

- Open the repository and click New Backup.

- Select local folders such as `~/Documents`, `~/Pictures`, or a project folder.

- Start the snapshot. The first run uploads the full selection; later runs are incremental.

- Use Restore from a backup record when you need to recover files to a local directory.
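Why later snapshots upload less can be illustrated with a content-addressed store: each file is identified by a hash of its contents, and only contents the repository has not seen before are uploaded. The sketch below is a simplified model under that assumption. OurClone's actual repository format is not described in this guide, and the sketch leaves out encryption entirely; the in-memory dictionary stands in for the Ceph bucket.

```python
import hashlib


def snapshot(files: dict, repo_blobs: dict) -> tuple:
    """Record a snapshot of files (path -> bytes).

    Returns (manifest, uploaded) where manifest maps path -> content hash
    and uploaded counts the blobs that were new to the repository.
    """
    manifest = {}
    uploaded = 0
    for path, data in sorted(files.items()):
        digest = hashlib.sha256(data).hexdigest()
        if digest not in repo_blobs:
            repo_blobs[digest] = data  # stands in for an upload to the bucket
            uploaded += 1
        manifest[path] = digest
    return manifest, uploaded


def restore(manifest: dict, repo_blobs: dict) -> dict:
    """Rebuild path -> bytes for one snapshot from the stored blobs."""
    return {path: repo_blobs[digest] for path, digest in manifest.items()}


if __name__ == "__main__":
    repo = {}
    first, n1 = snapshot({"notes.txt": b"v1", "photo.jpg": b"pixels"}, repo)
    second, n2 = snapshot({"notes.txt": b"v2", "photo.jpg": b"pixels"}, repo)
    print(n1, n2)  # the second run uploads only the changed file
    print(restore(first, repo)["notes.txt"])
```

Because older blobs stay in the repository, the first snapshot can still be restored after later runs.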

Mount Ceph Object Storage as a Local Folder

OurClone can mount Ceph Object Storage as a local operating-system directory. This is useful when you want to browse cloud files in Finder or File Explorer, open files from desktop apps, or copy files with the same habits you use for local folders.

- Open the mount area in OurClone and select your connected Ceph Object Storage account.

- Choose the remote path you want to expose locally.

- Pick a local mount point, then start the mount.

- When finished, unmount cleanly from OurClone before disconnecting your network or shutting down.

Best Practices for Ceph Object Storage

- Use a clear folder naming convention such as `/ourclone-backups`, `/sync`, or `/archive`.

- Confirm that your Ceph Object Storage account has enough storage before running a large migration or backup.

- Run a small test sync or restore before relying on a new workflow for important files.

- For large first-time jobs, keep your computer awake and connected to a stable network.

- Confirm the RGW endpoint is reachable from your network and has a valid TLS certificate before scheduling production tasks.

- If authentication fails later, ask the operator to confirm that the user's access keys are still valid, or to issue new ones, before restarting the task.
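The endpoint check in the list above can be done with a short script before scheduling jobs. This is a minimal sketch using only the Python standard library: it opens a TCP connection and completes a TLS handshake with certificate and hostname verification against the system trust store. The hostname `rgw.example.internal` is a placeholder, and a gateway that uses a private CA would need that CA added to the trust store first.

```python
import socket
import ssl
from urllib.parse import urlparse


def endpoint_host_port(endpoint: str) -> tuple:
    """Split an https:// endpoint URL into (host, port)."""
    parsed = urlparse(endpoint)
    if parsed.scheme != "https" or not parsed.hostname:
        raise ValueError("expected an https:// endpoint URL")
    return parsed.hostname, parsed.port or 443


def check_tls(endpoint: str, timeout: float = 5.0) -> str:
    """Complete a verified TLS handshake and return the negotiated version.

    Raises OSError if the host is unreachable and
    ssl.SSLCertVerificationError if the certificate is not trusted.
    """
    host, port = endpoint_host_port(endpoint)
    context = ssl.create_default_context()  # verifies cert and hostname
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.version()


if __name__ == "__main__":
    try:
        print("TLS OK:", check_tls("https://rgw.example.internal"))
    except (OSError, ValueError) as exc:
        print("Endpoint check failed:", exc)
```

A successful handshake only shows that the gateway is reachable and its certificate is trusted; it does not test the access keys.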