Why a Private Ceph Cluster Is a Strong Backup Home

Ceph's RADOS Gateway (RGW) turns a self-managed Ceph cluster into an S3-compatible object store. For organisations that already run Ceph for primary or secondary storage, that means Mac backups can land on infrastructure you already operate -- without sending data to a third-party cloud.

- 🏢 Storage You Already Own -- The bucket lives on your hardware, on your network, under your team's policies. There is no vendor pricing page that quietly changes underneath your backup workflow.

- 🔧 S3-Compatible Behavior -- RGW exposes an S3-compatible API, so OurClone treats Ceph the same way it treats any hosted S3 service -- access key, secret, endpoint, bucket path.

- 🔐 Encryption On Top of Cluster-Side Controls -- Ceph can encrypt at rest on its own, and OurClone encrypts the repository on your Mac before upload. Even an admin browsing RGW objects only sees opaque encrypted data.

- 📦 One Cluster, Many Repositories -- Per-user RGW credentials let you give each Mac its own scoped key, and each user can keep multiple OurClone repositories in the same bucket.

- ⚡ LAN-Speed First Snapshots -- When the cluster sits inside your office or data center network, the first big snapshot usually finishes much faster than it would over a typical internet uplink.

Why Incremental Snapshots Matter Even on Your Own Cluster

Just because the storage is yours does not mean you should waste it. Re-uploading the same project folders every night fills cluster capacity that other workloads also need -- and every redundant write adds replication or erasure-coding work and lengthens scrub windows on the underlying pool.

OurClone runs the first snapshot in full, then transfers only changed data on each later run. The Ceph bucket grows roughly with the new content you actually create, not with daily duplicates of the same archive.

For a private cluster shared between multiple teams, smaller incremental snapshots are also a good neighbour move -- one Mac's backup window stops swallowing the cluster's available throughput night after night.

- 🚀 Cuts upload time on every run after the first snapshot

- 💾 Keeps cluster usage proportional to actual changes

- 🔐 Each incremental snapshot still goes through the encrypted repository

- 📅 Lets you walk back through snapshots and restore an older version
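
The idea behind "transfers only changed data" can be illustrated with a small, generic sketch of chunk-level deduplication: split the data into chunks, hash each chunk, and upload only chunks the repository has not stored before. This is not OurClone's actual algorithm or repository format; the fixed 4 MiB chunk size and the `chunk_hashes` and `plan_upload` helpers are hypothetical, chosen only to show why a second run over mostly unchanged data uploads very little.

```python
import hashlib

CHUNK_SIZE = 4 * 1024 * 1024  # hypothetical fixed chunk size (4 MiB), for illustration only


def chunk_hashes(data: bytes, chunk_size: int = CHUNK_SIZE) -> list[str]:
    """Split `data` into fixed-size chunks and return each chunk's SHA-256 hex digest."""
    return [
        hashlib.sha256(data[i:i + chunk_size]).hexdigest()
        for i in range(0, len(data), chunk_size)
    ]


def plan_upload(data: bytes, known: set[str], chunk_size: int = CHUNK_SIZE) -> list[str]:
    """Return digests of chunks the repository does not hold yet, in order, without duplicates."""
    new: list[str] = []
    seen = set(known)
    for digest in chunk_hashes(data, chunk_size):
        if digest not in seen:
            seen.add(digest)
            new.append(digest)
    return new


if __name__ == "__main__":
    repo: set[str] = set()
    first = plan_upload(b"A" * 8 + b"B" * 8, repo, chunk_size=8)   # first snapshot: every chunk is new
    repo.update(first)
    second = plan_upload(b"A" * 8 + b"C" * 8, repo, chunk_size=8)  # only the second chunk changed
    print(len(first), len(second))  # prints: 2 1
```

Fixed-size chunks keep the sketch short, but inserting a few bytes near the start of a file shifts every later chunk boundary; deduplicating backup tools commonly use content-defined chunking to avoid that.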

Get the Ceph Side Ready Before You Back Up

Most "the Mac cannot connect" reports against Ceph come down to RGW endpoint reachability or credential scope. Sort both before you open OurClone.

- 🌐 Confirm the RGW S3 Endpoint -- The endpoint is required for Ceph in OurClone. Get the exact RGW URL from your cluster admin (something like `https://s3.example.internal`) and confirm that DNS, the TLS certificate, and firewall rules let your Mac reach it.

- 🔑 Create a Backup-Only RGW User -- Ask your admin (or run `radosgw-admin user create` yourself) for a dedicated RGW user with S3 access keys scoped to a specific bucket. Avoid reusing a service user that other workloads depend on.

- 📁 Pick the Right Folders -- Focus on folders that would actually hurt to lose: `~/Documents`, `~/Pictures`, code projects, and external drive folders. Skip caches and dependency directories.

- 🔌 Check VPN or LAN Reachability -- If the RGW endpoint is internal-only, your Mac must be on the LAN or connected through a VPN before backups can run. Run a quick `curl` against the endpoint to confirm reachability.

- 🧪 Start Small -- Run the first OurClone snapshot against a small folder so you can confirm the RGW endpoint, credentials, bucket scope, and restore flow before committing a multi-gigabyte archive.
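
The `curl` check above can also be scripted so that it separates the three usual failure points: DNS, network reachability, and certificate trust. The following is a minimal Python sketch using only the standard library; `endpoint_host_port` and `check_endpoint` are hypothetical helper names, and `https://s3.example.internal` is the placeholder endpoint from the list above, not a real host.

```python
import socket
import ssl
from urllib.parse import urlsplit


def endpoint_host_port(endpoint: str) -> tuple[str, int, bool]:
    """Split an RGW endpoint URL into (host, port, use_tls), applying default ports."""
    parts = urlsplit(endpoint)
    if parts.scheme not in ("http", "https") or not parts.hostname:
        raise ValueError(f"expected an http(s) URL with a host, got {endpoint!r}")
    use_tls = parts.scheme == "https"
    return parts.hostname, parts.port or (443 if use_tls else 80), use_tls


def check_endpoint(endpoint: str, timeout: float = 5.0) -> str:
    """Return a one-line diagnosis: DNS first, then TCP reachability, then (for https) TLS trust."""
    host, port, use_tls = endpoint_host_port(endpoint)
    try:
        socket.getaddrinfo(host, port)
    except socket.gaierror as exc:
        return f"DNS lookup failed for {host}: {exc}"
    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            if use_tls:
                ctx = ssl.create_default_context()  # checks the certificate chain and host name
                with ctx.wrap_socket(sock, server_hostname=host):
                    pass
    except ssl.SSLError as exc:  # must come before OSError, which it subclasses
        return f"TLS handshake failed (certificate trust or host name?): {exc}"
    except OSError as exc:
        return f"cannot reach {host}:{port} (firewall, VPN, or LAN?): {exc}"
    return f"OK: {host}:{port} is reachable" + (" over trusted TLS" if use_tls else "")


# Example with the placeholder endpoint: print(check_endpoint("https://s3.example.internal"))
```

Python's default trust store is not necessarily the macOS Keychain that apps use, so a TLS failure here is a prompt to check which CA signed the RGW certificate rather than proof that OurClone will fail.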

Backing Up macOS Folders to a Ceph Cluster With OurClone

Once your RGW endpoint and access key are ready, the rest happens entirely inside OurClone. Five steps cover everything from connecting Ceph to restoring a file.

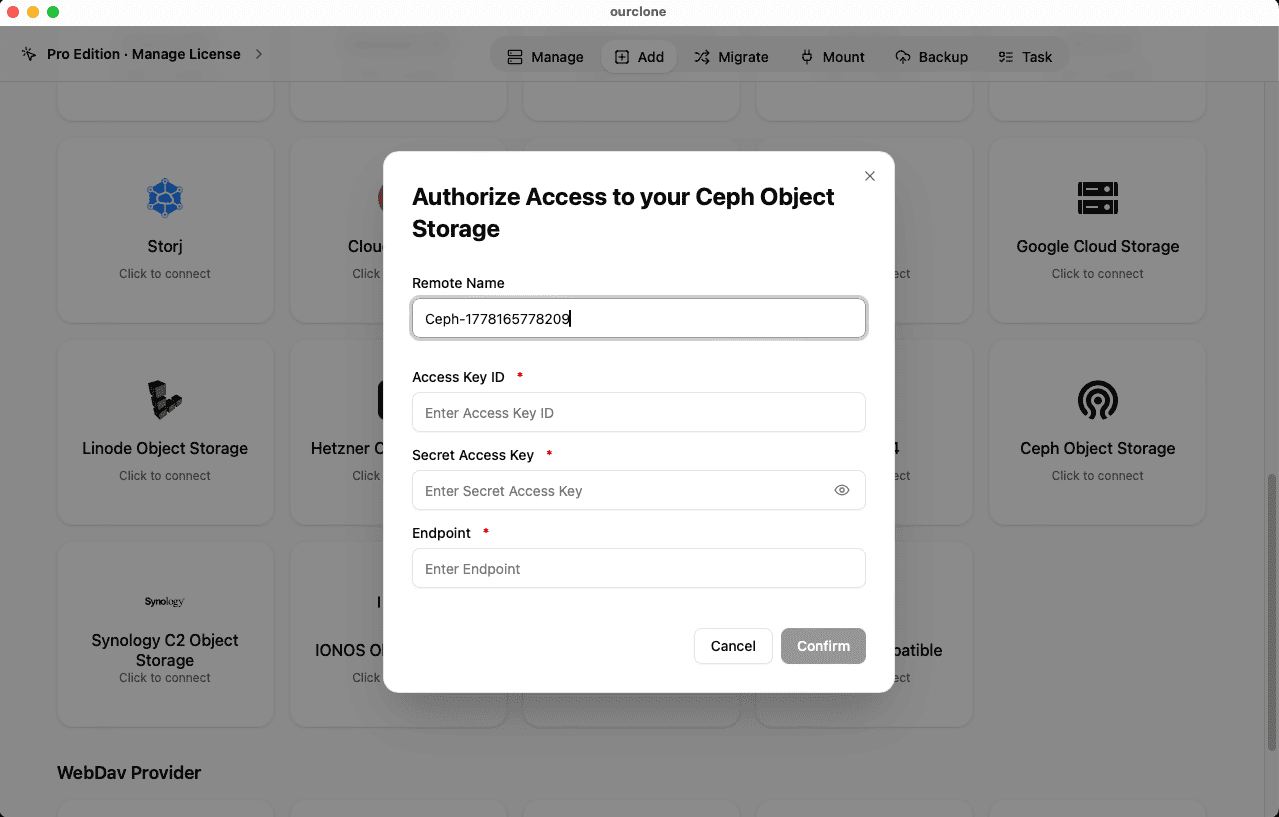

- 🔗 Add Ceph Object Storage in Add Storage -- In OurClone, open

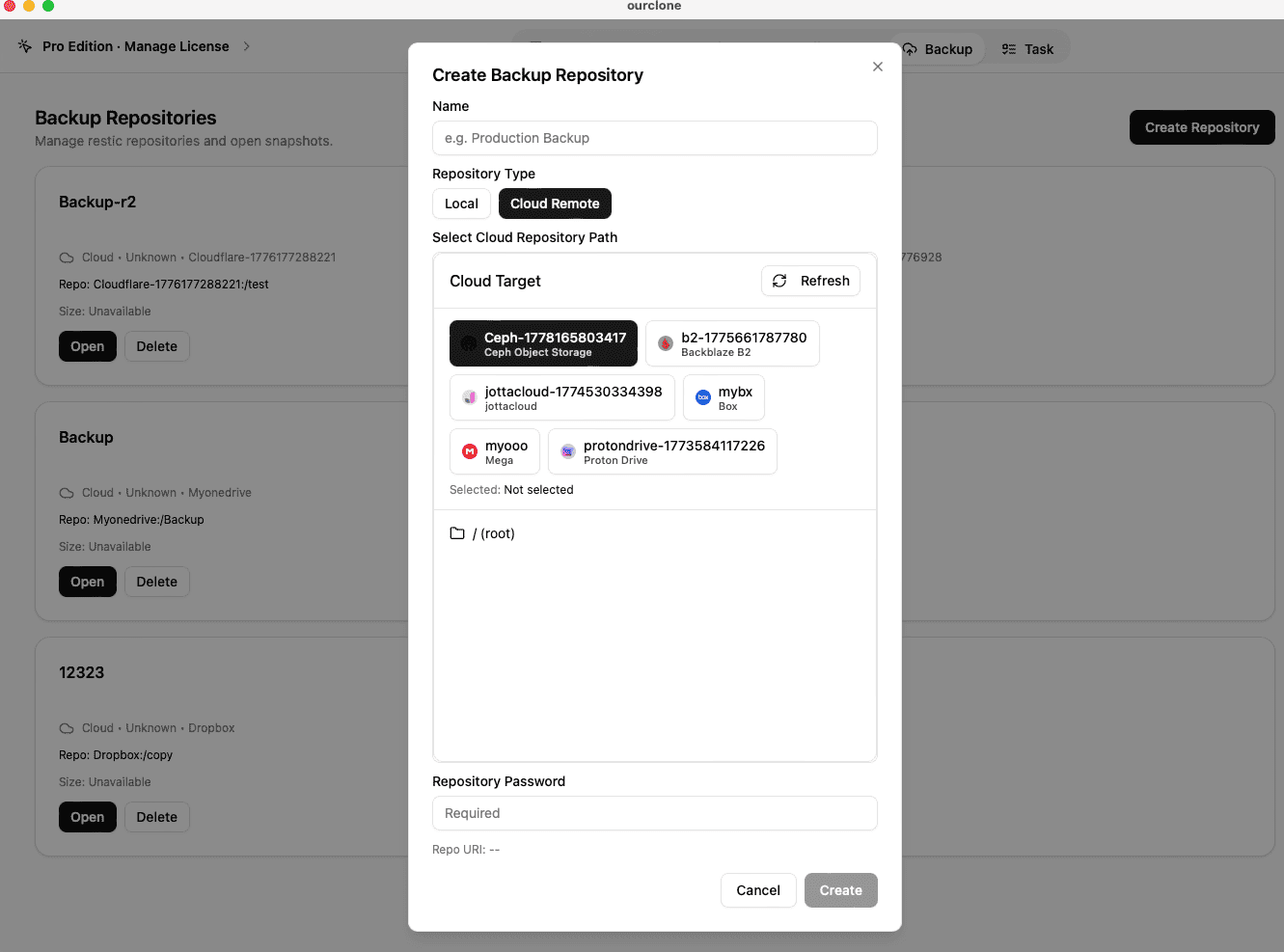

Add Storageand pick Ceph Object Storage. Give the connection a custom name like "Internal Ceph -- Mac Backup", then paste the RGW user's Access Key ID and Secret Access Key. Add the RGW S3 endpoint exactly as it appears in your cluster docs (including protocol, host, and port if non-standard). Save the connection. - 📦 Create a Backup Repository in Your Ceph Bucket -- Open the

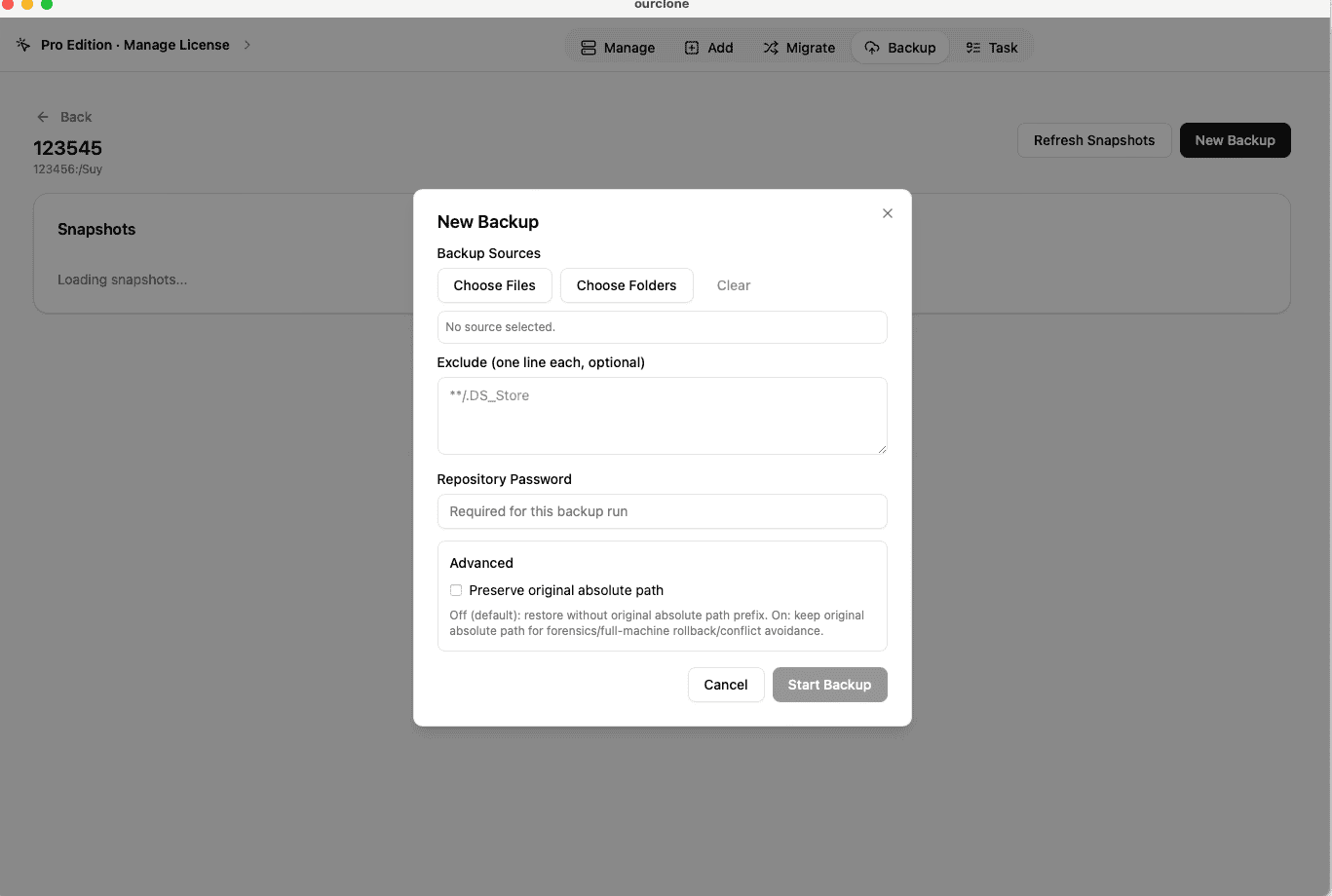

Backuptab and create a new repository. Choose your Ceph connection as the destination, point it at a path inside the bucket the RGW user owns (for examplebackups/mac-laptop), give the repository a clear name, and set a strong repository password. That password encrypts the repository and is required for every snapshot and restore -- save it in a password manager. - 🗂️ Snapshot Local Folders -- Open the new repository and create a snapshot. Pick macOS folders such as

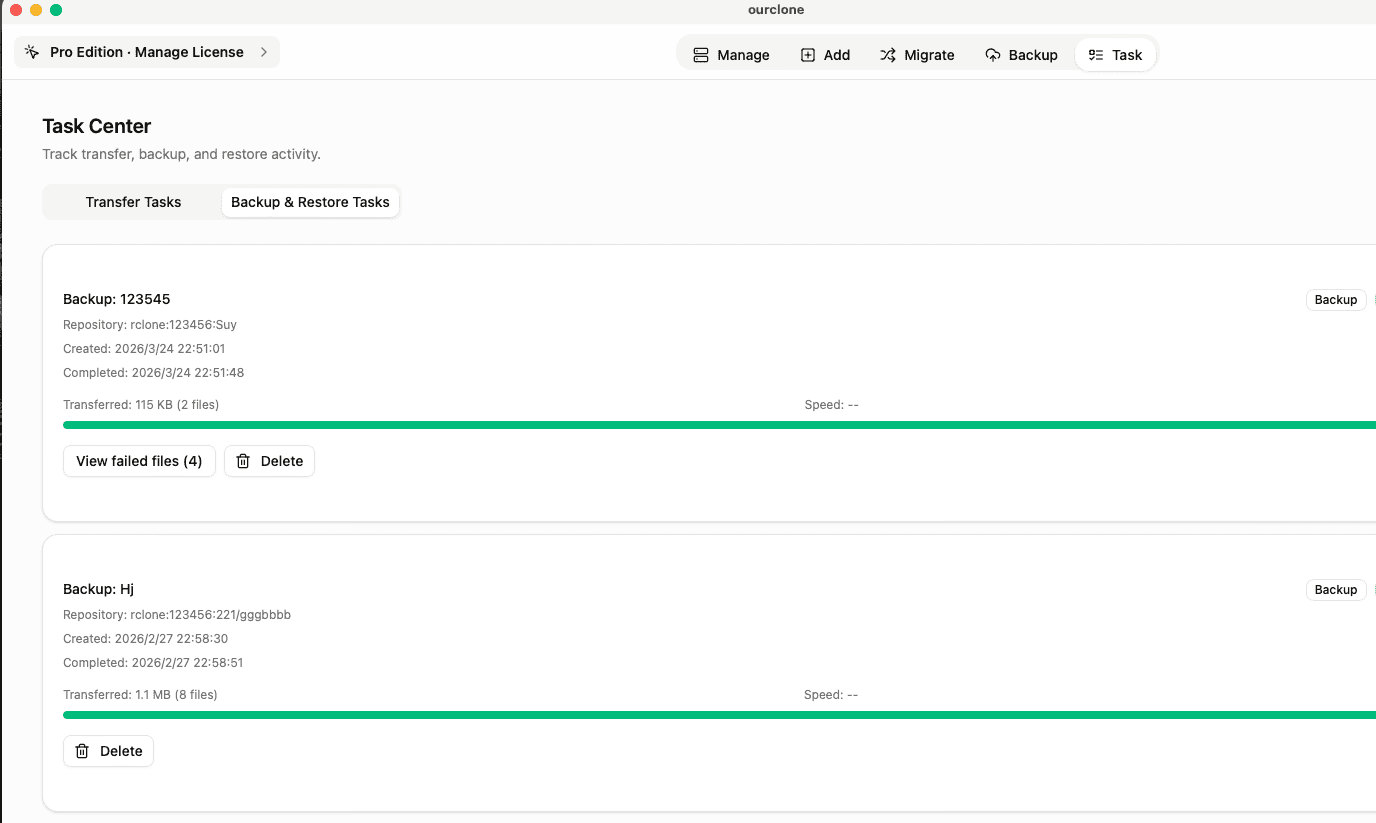

~/Documents, a project tree, or an external drive folder. OurClone packages, encrypts, and uploads the data into your Ceph bucket. The first run is a full snapshot; later runs of the same folders are incremental. - 🕒 Watch It Run From Task -- Backup & Restore -- Open the

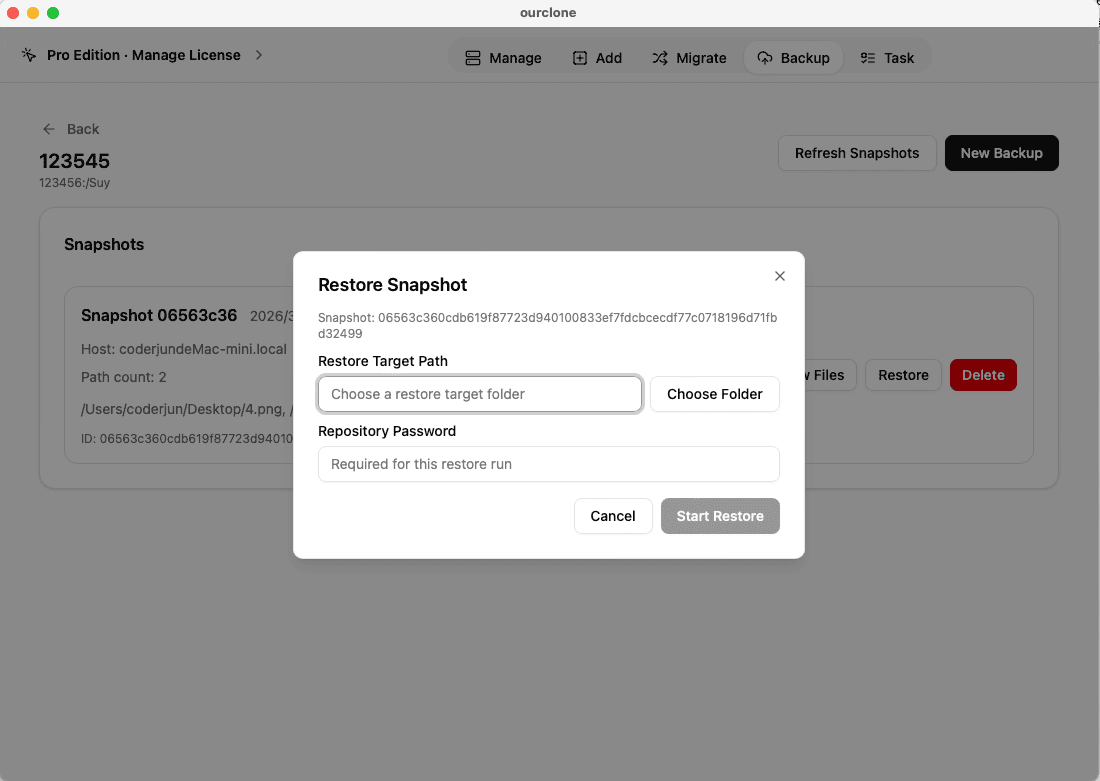

Tasktab and switch toBackup & Restore. The active Ceph task shows progress, throughput, and any warnings. Chunked uploads keep the snapshot moving even when the cluster is busy with other RGW workloads. - 🔁 Restore From a Snapshot -- In the Ceph repository, pick the snapshot with the files you need, click

Restore, enter the repository password, and choose a local destination. OurClone decrypts the data and writes the files back. You can restore one folder, a subset, or the whole snapshot.
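
If the first two steps fail with a connection or permission error, it can help to try the same endpoint, keys, and bucket outside OurClone. The sketch below assumes the third-party `boto3` library is installed (`pip install boto3`) and lists a few objects under the repository path; the bucket name `mac-backups`, the helper names `normalize_prefix` and `probe_repository`, and the placeholder credentials are hypothetical. Path-style addressing is set because it does not require wildcard DNS for bucket subdomains, which a private RGW deployment may not have.

```python
def normalize_prefix(path: str) -> str:
    """Turn a repository path like 'backups/mac-laptop' into the S3 key prefix 'backups/mac-laptop/'."""
    cleaned = path.strip("/")
    return f"{cleaned}/" if cleaned else ""


def probe_repository(endpoint: str, access_key: str, secret_key: str,
                     bucket: str, repo_path: str, max_keys: int = 5) -> list[str]:
    """List up to `max_keys` object keys under the repository path.

    Raises botocore.exceptions.ClientError for errors such as AccessDenied or NoSuchBucket,
    and botocore.exceptions.EndpointConnectionError if the endpoint cannot be reached.
    """
    # Third-party imports kept local so normalize_prefix() works without boto3 installed.
    import boto3
    from botocore.config import Config

    s3 = boto3.client(
        "s3",
        endpoint_url=endpoint,
        aws_access_key_id=access_key,
        aws_secret_access_key=secret_key,
        config=Config(s3={"addressing_style": "path"}),  # bucket goes in the URL path, not the host name
    )
    resp = s3.list_objects_v2(Bucket=bucket, Prefix=normalize_prefix(repo_path), MaxKeys=max_keys)
    # "Contents" is absent when nothing exists under the prefix yet (e.g. before the first snapshot).
    return [obj["Key"] for obj in resp.get("Contents", [])]


# Example call (placeholders only, not real credentials):
# probe_repository("https://s3.example.internal", "ACCESS_KEY_ID", "SECRET_ACCESS_KEY",
#                  "mac-backups", "backups/mac-laptop")
```

An empty list on a brand-new repository path is normal; an `AccessDenied` error usually means the RGW user's key is not scoped to that bucket.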

Because OurClone speaks plain S3 to RGW, the workflow keeps working if you later move the cluster behind a different DNS name or upgrade the gateway -- you only need to update the endpoint.

Confirm Your Ceph Backup and Keep It Healthy

A self-hosted backup is only as trustworthy as the operator who watches it. A short check-in routine keeps the Ceph backup honest.

- 📄 Check Task Status After Each Run -- In **Task** -> **Backup & Restore**, confirm the latest Ceph task finished cleanly. Repeated failures usually point at the RGW endpoint, the access key, or a network change between your Mac and the cluster.

- 🧩 Read Skipped File Notes -- macOS privacy protections and file permissions can block OurClone from reading specific files. The task log lists which files were skipped, so you can grant OurClone Full Disk Access (System Settings > Privacy & Security > Full Disk Access) or move the file and re-run.

- 📜 Inspect the Detailed Log -- Open a finished Ceph task to see which files were new, which were unchanged, and how much data the incremental run uploaded. That helps you spot folders that suddenly grew.

- 🔐 Treat the Repository Password as Critical -- The Ceph bucket only stores encrypted repository data. Without the repository password, even a cluster admin with full bucket visibility cannot read your snapshots.
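
Before a large first snapshot, you can preview which files a process cannot read. This minimal Python sketch walks a folder and tries to open each regular file; `find_unreadable` is a hypothetical helper, not part of OurClone. One caveat matters on macOS: privacy protections apply per app, so the result reflects what the app running the script (for example Terminal) can read, not necessarily what OurClone can read after you grant it Full Disk Access.

```python
import os


def find_unreadable(root: str) -> list[str]:
    """Walk `root` and return paths this process cannot list or open for reading."""
    problems: list[str] = []

    def on_walk_error(err: OSError) -> None:
        # os.walk calls this when it cannot list a directory (including `root` itself).
        problems.append(err.filename)

    for dirpath, _dirnames, filenames in os.walk(root, onerror=on_walk_error):
        for name in filenames:
            path = os.path.join(dirpath, name)
            # Skip symlinks, sockets, FIFOs, etc.; opening a FIFO could block.
            if os.path.islink(path) or not os.path.isfile(path):
                continue
            try:
                with open(path, "rb") as fh:
                    fh.read(1)
            except OSError:
                problems.append(path)
    return problems


# Example: print(find_unreadable(os.path.expanduser("~/Documents")))
```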

Watch for Endpoint, DNS, and Certificate Drift

Self-hosted clusters change. The RGW endpoint can move behind a new hostname, certificates can rotate, and RGW users can be regenerated as part of a credential review. If a backup suddenly fails, recheck the endpoint URL, certificate trust, and access key, and confirm your Mac can still reach the cluster from its current network.
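
Certificate rotation is one of the easier drifts to catch ahead of time. The sketch below, in standard-library Python, connects to the RGW endpoint with certificate verification enabled and reports how many days remain before the certificate expires; the helper names `days_until` and `certificate_days_left` are hypothetical, and `s3.example.internal` is the placeholder host used earlier. If the handshake itself fails with a verification error, the certificate chain is no longer trusted on this machine, which is worth raising with the cluster team.

```python
import socket
import ssl
import time
from typing import Optional


def days_until(not_after: str, now: Optional[float] = None) -> float:
    """Days from `now` (default: current time) until an X.509 notAfter timestamp.

    `not_after` uses the format returned by SSLSocket.getpeercert(), e.g. "Jan  5 09:34:43 2018 GMT".
    A negative result means the certificate has already expired.
    """
    expires = ssl.cert_time_to_seconds(not_after)
    current = time.time() if now is None else now
    return (expires - current) / 86400


def certificate_days_left(host: str, port: int = 443, timeout: float = 5.0) -> float:
    """Fetch the server certificate with verification on and return days until it expires."""
    ctx = ssl.create_default_context()  # verifies the chain and that the certificate matches `host`
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with ctx.wrap_socket(sock, server_hostname=host) as tls:
            cert = tls.getpeercert()
    return days_until(cert["notAfter"])


# Example (placeholder host): print(certificate_days_left("s3.example.internal"))
```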

Run a Restore Drill on a Quiet Afternoon

Pick a small folder from a recent Ceph snapshot and restore it into a throwaway directory on your Mac. That dry-run confirms the RGW endpoint, the access key, the repository password, and the OurClone restore flow are all still working together -- and gives the cluster team confidence that the backup pattern actually delivers when it matters.
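
To make the drill more than a visual check, you can compare the restored copy against the original folder by content. This minimal Python sketch hashes every regular file in both trees with SHA-256 and reports files that are missing, extra, or different; `tree_digests` and `compare_trees` are hypothetical helpers. It compares file contents only, not permissions or timestamps, and it assumes the original folder has not changed since the snapshot was taken; otherwise some differences are expected.

```python
import hashlib
import os


def tree_digests(root: str) -> dict[str, str]:
    """Map each regular file's path (relative to `root`) to its SHA-256 hex digest."""
    digests: dict[str, str] = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            full = os.path.join(dirpath, name)
            if os.path.islink(full) or not os.path.isfile(full):
                continue  # compare regular files only
            h = hashlib.sha256()
            with open(full, "rb") as fh:
                for block in iter(lambda: fh.read(1 << 20), b""):  # read in 1 MiB blocks
                    h.update(block)
            digests[os.path.relpath(full, root)] = h.hexdigest()
    return digests


def compare_trees(original: str, restored: str) -> dict[str, list[str]]:
    """Report relative paths missing from, extra in, or changed in the restored tree."""
    a, b = tree_digests(original), tree_digests(restored)
    return {
        "missing": sorted(a.keys() - b.keys()),
        "extra": sorted(b.keys() - a.keys()),
        "changed": sorted(k for k in a.keys() & b.keys() if a[k] != b[k]),
    }


# Example (hypothetical paths): compare_trees("/Users/me/Projects/demo", "/tmp/restore-drill/demo")
```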